Beyond M2M to Enterprise IoT

For several years, M2M platforms have provided reasonable solutions for connecting machines to cloud services (strictly speaking this is M2C, as M2M platforms generally do not support peer-to-peer device communications). But these platforms have struggled to create large markets or to provide strategic, enterprise-wide solutions. They have mostly been restricted to vertical, tactical applications: in effect, self-contained ‘stovepipe’ systems.

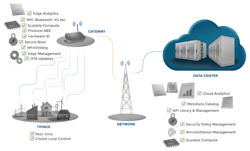

But to fully exploit the potential of the IoT, data must be free to flow to wherever in the system it can add value, e.g. between ‘edge’ devices for control purposes, to gateways for data aggregation/ingestion and local analytics, to cloud-based applications for Big Data analytics, to enterprise systems for OT/IT alignment and supply-chain integration, and to mobiles for on-demand data delivery to employees (see Figures 1 and 2). The promise of Enterprise IoT is the new value created through ubiquitous data availability (and the processing of that data by applications into actionable insights). Realizing it requires a new generation of platforms that provide the data-connectivity to support a new generation of distributed IoT applications.

Figure 1. A Layered Enterprise IoT System Architecture

One of the biggest differences between traditional M2M and Enterprise IoT systems is that ‘horizontal’ as well as ‘vertical’ data-flow must be supported. Vertical silos of data do not provide the potential to add value beyond a specific sub-system, so a fundamental feature of next-generation IoT platforms will be a data-connectivity layer that supports system-wide data-delivery as required: the right data, in the right place, at the right time, system-wide.

Learn more about data connectivity at PrismTech's Smart Industry 2015 breakout sessions:

- Bridging the IT/OT Divide: Field-to-cloud Implementations

- Buy or Build? Gain Scale and Speed with IIoT Platforms & Services

There are many potential ways (control, analytics, dashboards, event processing, mobile apps, etc.) to exploit all this newly accessible IoT data, but it needs to be delivered to the appropriate application in a timely manner wherever in the system that application may reside (on an edge device, gateway, enterprise server, tablet, or in the cloud). Only then can the data be converted into new ‘actionable insights’ and thus new business value.

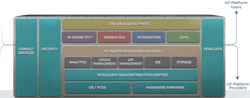

Figure 2. End-to-end IoT System Functionality: Providing intelligent data-connectivity for end-to-end systems embracing Things, gateways, enterprise servers, cloud services, mobiles, etc. to support Enterprise IoT solutions.

To provide this underlying capability, a data-connectivity layer needs to be deployed across all the nodes in the system, or at least all the nodes that are required to share data (publish and/or subscribe). In effect, it is an enterprise version of Twitter for Things.
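To make the ‘Twitter for Things’ idea concrete, here is a minimal in-process sketch in Python of a publish/subscribe data bus. It is illustrative only: `DataBus`, `subscribe` and `publish` are hypothetical names, not the Vortex or DDS API, and a real data-connectivity layer would deliver samples across a network rather than within one process. What it shows is decoupling: the publisher names a topic, not a destination, so a gateway and a cloud service can both receive the same sample without the publisher knowing about either of them.

```python
from collections import defaultdict
from typing import Any, Callable

# A callback receives the topic name and the published sample.
Callback = Callable[[str, dict[str, Any]], None]


class DataBus:
    """Toy in-process stand-in for a data-connectivity layer.

    Hypothetical illustration only; this is not the Vortex or DDS API.
    """

    def __init__(self) -> None:
        # topic name -> callbacks registered by interested nodes
        self._subscribers: dict[str, list[Callback]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callback) -> None:
        # Any node (edge device, gateway, cloud service, mobile) can register interest.
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, sample: dict[str, Any]) -> int:
        # The publisher names a topic, not a destination: it does not know who
        # the subscribers are or where they run. Returns the number of deliveries.
        callbacks = self._subscribers.get(topic, [])
        for callback in callbacks:
            callback(topic, sample)
        return len(callbacks)


# Usage: one sensor reading reaches two independent consumers.
bus = DataBus()
received: list[tuple[str, str]] = []
bus.subscribe("pump/temperature", lambda t, s: received.append(("gateway", t)))
bus.subscribe("pump/temperature", lambda t, s: received.append(("cloud", t)))
delivered = bus.publish("pump/temperature", {"pump_id": "P-7", "celsius": 71.5})
print(delivered)  # 2
```

Because publishers and subscribers share only topic names, new consumers (an analytics engine, a mobile dashboard) can be added without changing the devices that produce the data.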

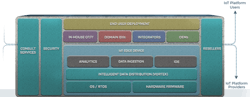

In simple terms, the diagrams in Figures 3 and 4 show, respectively, how this layer can be deployed both in the cloud (to support cloud services) and on devices (Things, servers, PCs, mobiles, etc.). They also show potential sources of the applications the platform connects (end-user developers, ISVs, SIs, OEMs).

Figure 3. IoT Cloud Services Environment: PrismTech's Vortex provides the intelligent data-connectivity between the functional components within a cloud PaaS offering for Enterprise IoT solutions.

[Note that the data-connectivity layer supports not only inter-node data-sharing but also data-sharing between the application components of the IoT platform itself, i.e. interoperability between platform services (such as IDEs, edge-device management, API management and analytics engines) as well as between Things.]

Figure 4. IoT Edge-Device Environment: Similar to the PaaS offering, PrismTech's Vortex provides the intelligent data-connectivity between functional components in an IoT device and other devices, sub-systems and cloud services for Enterprise IoT solutions.

This data-sharing layer must support multiple protocols (see Figure 1 above and http://www.prismtech.com/vortex/vortex-gateway), but its core protocol needs to be data-centric, not simple messaging. Enterprise systems need a range of Qualities-of-Service (content-based prioritization, automatic discovery, scalability, throughput) that basic protocols such as MQTT or CoAP do not support. Hence Vortex is based on DDS, the IoT protocol with the best QoS support, which (as implemented in Vortex) is also very efficient, low-overhead and easy to configure, with excellent tools. So Vortex ingests data from many different protocol sources via Vortex Gateway, but uses DDS as its core protocol to ensure enterprise-level QoS.
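The difference between data-centric sharing and simple messaging can be sketched in the same way. The toy `KeyedTopic` class below (hypothetical, not the Vortex API) keeps only the latest sample for each key, applies content filters inside the data layer, and hands a late-joining subscriber the current state immediately. These behaviours loosely mirror DDS concepts (keyed instances, the HISTORY QoS with KEEP_LAST depth 1, TRANSIENT_LOCAL-style durability, and content-filtered topics), but the sketch omits discovery, networking and the many other QoS policies a real DDS implementation provides.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

Sample = dict[str, Any]
Filter = Callable[[Sample], bool]
Callback = Callable[[Sample], None]


@dataclass
class KeyedTopic:
    """Toy data-centric topic that stores the latest sample per key.

    Hypothetical illustration of DDS-like ideas; not the Vortex API.
    """

    key_field: str
    _latest: dict[Any, Sample] = field(default_factory=dict, init=False)
    _readers: list[tuple[Filter, Callback]] = field(default_factory=list, init=False)

    def write(self, sample: Sample) -> None:
        # Overwrite the previous value for this key: the topic holds state,
        # not an ever-growing queue of messages.
        self._latest[sample[self.key_field]] = sample
        for accept, deliver in self._readers:
            if accept(sample):  # content-based filtering in the data layer
                deliver(sample)

    def subscribe(self, callback: Callback, accept: Filter = lambda s: True) -> None:
        self._readers.append((accept, callback))
        # A late joiner immediately receives the current value of every
        # matching key instead of waiting for the next update.
        for sample in self._latest.values():
            if accept(sample):
                callback(sample)


# Usage: an alarm service joins late and only wants overheating pumps.
temps = KeyedTopic(key_field="pump_id")
temps.write({"pump_id": "P-1", "celsius": 60.0})
temps.write({"pump_id": "P-2", "celsius": 85.0})
temps.write({"pump_id": "P-1", "celsius": 62.5})  # replaces P-1's earlier value

alarms: list[Sample] = []
temps.subscribe(alarms.append, accept=lambda s: s["celsius"] > 80.0)
print(alarms)  # [{'pump_id': 'P-2', 'celsius': 85.0}]
temps.write({"pump_id": "P-1", "celsius": 90.0})
print(len(alarms))  # 2
```

A plain message queue would give the late subscriber either nothing or every historical message; the keyed topic gives it just the current value of each matching pump.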

As literally billions of new connected Things are deployed in enterprises over the next few years, and as existing enterprise sub-systems are connected by an IoT backbone, a new approach to data-connectivity is needed to reap value from all this data.

We read much about new ‘Things’ (intelligent, connected edge-devices) and much about ‘Cloud Services’. But the real key to unlocking the value of Enterprise IoT is on-demand, system-wide data-delivery to the applications where the data can best be used to generate new insights, and thus opportunities for enhanced control, process optimization and asset productivity. In turn, that will result in new services, lower costs and less waste. Data generation (Things) → data delivery (IoT platforms) → data analysis (applications) → actionable insights (visualization) → business value (top-line, bottom-line and societal).

IDC and others forecast Enterprise IoT to be a $1T market by 2020, whereas the M2M market today is but a small fraction of that. The gap is best explained by the difference between narrow, tactical, vertical integration (M2M) and broad, strategic, horizontal/vertical integration (Enterprise IoT). To make the transition, enterprises need to deploy data-connectivity platforms that support system-wide data-delivery. Without timely delivery of the raw material (data), how can you create value from it? So Enterprise IoT is all about 1) the data, 2) the platforms to deliver it, and 3) the apps to extract business value from it.

Steve Jennis is the Senior VP of Corporate Development for PrismTech and a member of the Advisory Board and Program Committee for the Smart Industry Conference and Expo. PrismTech is a provider of system solutions for the Internet of Things, the Industrial Internet and advanced wireless communications. Learn more about their Vortex intelligent data-sharing platform at www.prismtech.com/vortex.