Augmented Reality Training: Worth the investment?

According to research from The Boston Consulting Group, “The average U.S. high-skilled manufacturing worker is 56 years old.” That means the knowledge these workers possess needs to be handed down in a way that is actionable and accessible to new employees just entering the workforce.

But new workers find it difficult to prepare for future needs when they don’t know what tools they will be using in, say, three years’ time, said Paul Davies of Boeing during a discussion on workforce enablement at Smart Industry 2015 in Chicago this month. Davies, Associate Technical Fellow in the Advanced Production Systems group at Boeing Research & Technology, is currently the principal investigator for technology development projects in augmented reality, machine vision and advanced visualization techniques in the manufacturing domain.

“We look for people constantly willing to learn new things and to not be satisfied with the status quo, but being open to change. That has worked out well for me and for my team,” he explained.

In the past, operators were taught using paper manuals or a flat human-machine interface (HMI) visualization screen. Now, with virtual reality (VR) programs, the worker can be fully immersed in the environment. This immersion means that training can happen faster because students retain more information when they learn through the more engaging VR program.

But if the training method being used at your plant right now seems sufficient, how do you justify the expense of improving training with new virtual technologies?

Davies was part of a research project that aimed to measure how much difference there is between static training and more active, immersive training. He worked with a group at Iowa State University to test the value of augmented reality (AR) training over more traditional training methods.

AR training provides a direct or indirect view of a physical, real-world environment whose elements are augmented by computer-generated input. In this case, the augmentation was the superimposition of new parts, shown in position on a 3D model.

Student participants were divided into three groups and asked to put together a mock airplane wing. One group had instructions on a desktop computer, another on a mobile tablet. The last group had the instructions converted to AR and registered as an overlay on the assembly. In this mode, a blue digital object in the form of a 3D model is rendered on the screen in the proper position for assembly, as if it were in the physical world.

Students in the study ranged from 18 to 44 years of age and included both men and women, and both engineering and non-engineering majors. Interestingly, the results of the study show no significant difference in the number of errors or completion speed across the different demographics.

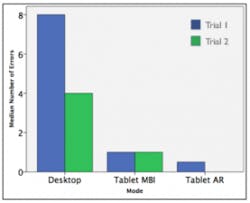

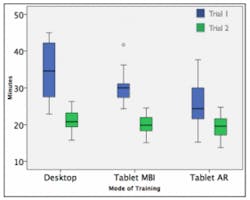

The testers found that the group using the AR instructions made 90% fewer errors during the assembly than the group using instructions on the desktop computer. The time to build the wing was also reduced by about 35%.
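For readers who want to run the same comparison on their own training data, the percentages above are simple relative reductions against a baseline. The short Python sketch below shows the arithmetic. The function name and all input values are hypothetical placeholders chosen only to illustrate the calculation; they are not figures from the study.

```python
def percent_reduction(baseline: float, improved: float) -> float:
    """Return how much smaller `improved` is than `baseline`, as a percentage of baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) * 100.0 / baseline


# Hypothetical placeholder values, NOT data from the study.
desktop_errors = 10.0   # average errors per assembly with desktop instructions
ar_errors = 1.0         # average errors per assembly with AR instructions
desktop_minutes = 20.0  # average build time with desktop instructions
ar_minutes = 13.0       # average build time with AR instructions

error_reduction = percent_reduction(desktop_errors, ar_errors)
time_reduction = percent_reduction(desktop_minutes, ar_minutes)

print(f"Error reduction: {error_reduction:.0f}%")
print(f"Time reduction: {time_reduction:.0f}%")
```

Substituting error counts and build times from your own plant's current and pilot training methods gives a like-for-like number to weigh against the cost of the new technology.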

A breakdown of errors by instruction delivery mode shows an impressive reduction in errors in the Tablet AR mode compared with both the Desktop and Tablet MBI (model-based instructions) modes.

The amount of time spent in each mode indicates that most AR participants completed the assembly task faster the first time than participants using the other modes.

For a more detailed account of the study, see the full paper, “Fusing Self-Reported and Sensor Data from Mixed-Reality Training.”